Octopilot: An Instructor-Governed AI Coding Environment for Computer Science Education

Abstract

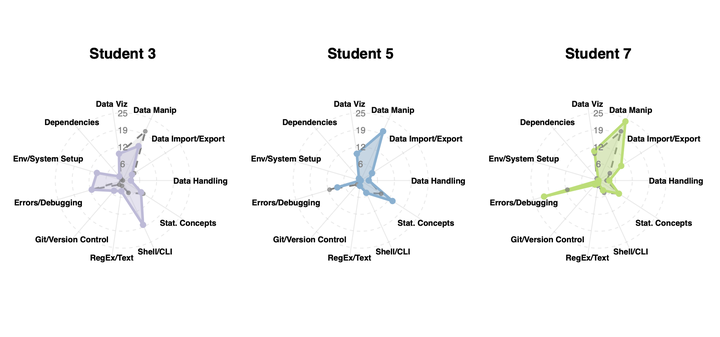

Generative AI tools have sparked both excitement and concern in education. Programming assistants like GitHub Copilot are among the earliest and most capable tools to emerge from recent advances in large language models. Yet despite their power, how to use them productively in classrooms remains an open question, as they often provide shortcuts that conflict with learning objectives. This challenge stems in part from the difficulty instructors face in adapting and managing these tools for their courses. To address this gap, we built Octopilot, an instructor-controlled AI tool for computer science education that gives instructors both control over AI behavior and visibility into student learning processes. Octopilot is easy to customize and deploy, integrates directly into students’ programming environments with education-friendly guardrails, and captures detailed telemetry on individual student progress. We present a pilot deployment in which 11 students engaged in over 1,200 chats with Octopilot across a median of 31 hours, revealing stark differences in how students allocated their time and the types of support they sought. A participatory design study showed strong enthusiasm for tools like Octopilot while also identifying opportunities for improvement. We show that instructor-controlled AI tools can support constructive classroom use and provide instructors with actionable insights into student learning processes, insights that surface aspects of student knowledge and learning gaps that outcome-based assessments alone do not capture.