How visualizing inferential uncertainty can mislead readers about treatment effects in scientific results 🏆

Abstract

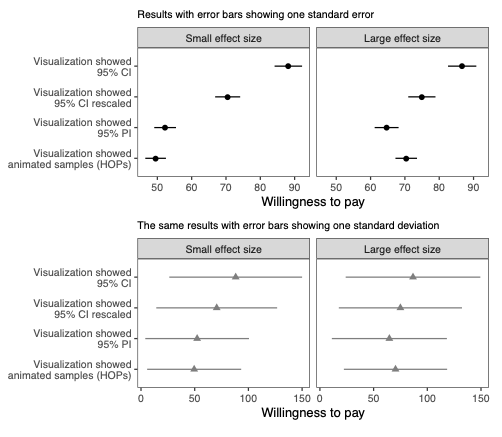

When presenting visualizations of experimental results, scientists often choose to display either inferential uncertainty (e.g., uncertainty in the estimate of a population mean) or outcome uncertainty (e.g., variation of outcomes around that mean) about their estimates. How does this choice impact readers’ beliefs about the size of treatment effects? We investigate this question in two experiments comparing 95% confidence intervals (means and standard errors) to 95% prediction intervals (means and standard deviations). The first experiment finds that participants are willing to pay more for and overestimate the effect of a treatment when shown confidence intervals relative to prediction intervals. The second experiment evaluates how alternative visualizations compare to standard visualizations for different effect sizes. We find that axis rescaling reduces error, but not as well as prediction intervals or animated hypothetical outcome plots (HOPs), and that depicting inferential uncertainty causes participants to underestimate variability in individual outcomes.